What’s New in Pandas 1.0 and TensorFlow 2.0

This article assumes some prior knowledge of these libraries. If you need a quick introduction, here are some great Medium articles for Pandas and TensorFlow.

Pandas 1.0.0

source: Pandas User Guide

(Released on January 29, 2020)

Pandas (short for Panel Data) is a Python package for data analysis and manipulation that was started at AQR Capital Management back in 2008 and later open-sourced. Its main feature is the DataFrame object, which gives Python users a simple and standardized way to interact with tabular data. When I started using it, I thought it was odd that such prominent and widely used software had not had a 1.0 release.

There are not many changes from 0.25.3 → 1.0.0. Nonetheless, here are the major themes.

New DataFrame Column Types

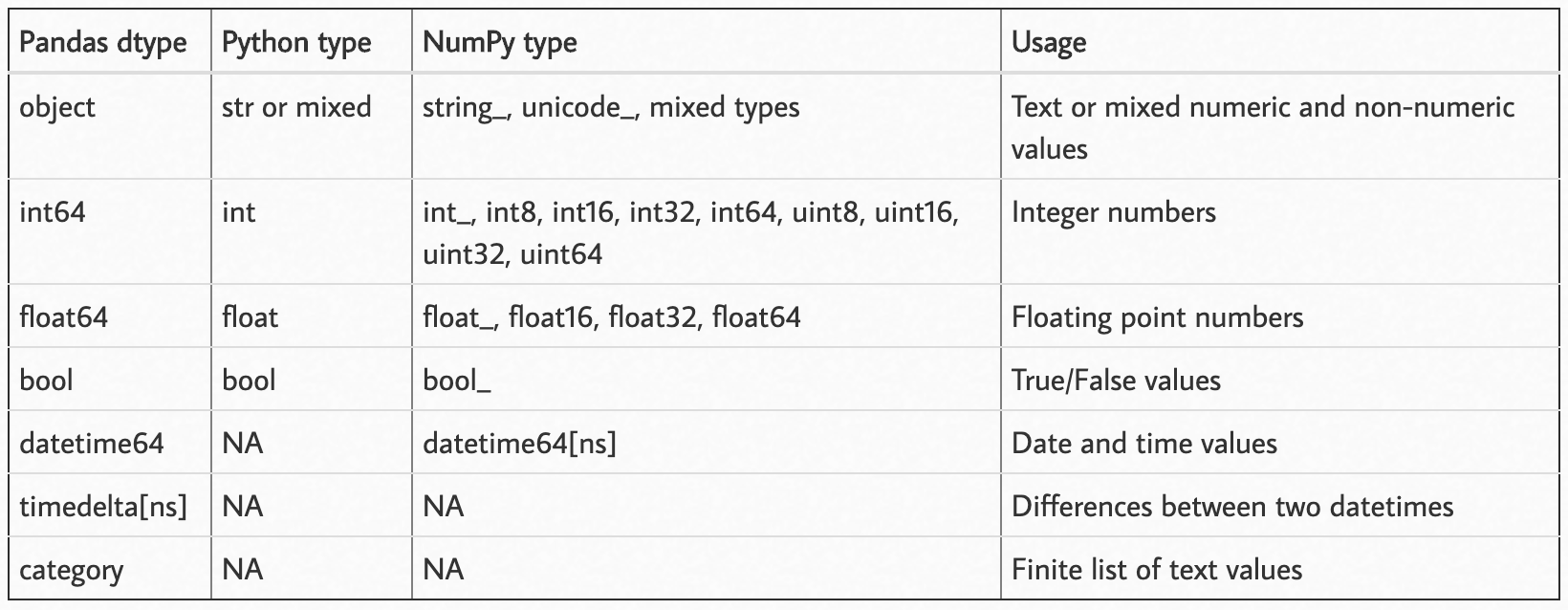

This is how Pandas handled data types before the 1.0 release:

source: https://pbpython.com/pandas_dtypes.html

Now we can add two more to that list: pd.StringDtype() and pd.BooleanDtype().

String Types: Before the string data type was added, Pandas string columns were encoded as NumPy object arrays. This was too general, because anything can be stored in a Pandas object column. It was difficult to tell columns that held strings and only strings apart from columns that contained a mixture of object types.

Now, with a pd.StringDtype() column, the string methods on the .str accessor, like .str.lower(), .str.upper(), .str.replace(), etc., operate on a column that is known to hold only strings and missing values.
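Here is a minimal sketch of the dedicated string type, assuming pandas 1.0 or later. The column contents and variable names are made up for illustration.

```python
import pandas as pd

# A column that is declared to hold only strings (and missing values)
names = pd.Series(["Alice", "bob", None], dtype=pd.StringDtype())

# String methods are reached through the .str accessor
lowered = names.str.lower()

# A column with mixed contents stays a generic object column
mixed = pd.Series(["a", 1])

print(names.dtype)
print(lowered)
print(mixed.dtype)
```

The missing entry shows up as <NA> in the string column rather than as None.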

Boolean Types: Before the new Pandas boolean type, boolean columns were encoded as bool type NumPy arrays. The only problem was that these arrays could not include missing values. The new pd.BooleanDtype() solves that problem.
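A small sketch of the difference, again assuming pandas 1.0 or later (the variable names are illustrative):

```python
import pandas as pd

# Without the nullable type, a missing value forces the column to object
plain = pd.Series([True, False, None])

# With the nullable boolean type, the column stays boolean
flags = pd.Series([True, False, None], dtype=pd.BooleanDtype())

print(plain.dtype)
print(flags.dtype)
print(flags.isna().tolist())
```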

Speaking of Missing Values…

Three different values denote “missing” for Pandas objects: np.nan for float types, pd.NaT for datetime types, and None for other object types. Some people (including me) thought this was annoying and confusing.

Introducing pd.NA , the new catch-all Pandas type for all missing data. So how does it work?

For arithmetic operations, anything that is added to, subtracted from, concatenated with, multiplied by, or divided by pd.NA will return pd.NA.

In[1]: pd.NA + 72

Out[1]: <NA>

In[2]: pd.NA * "abc"

Out[2]: <NA>

There are a few special cases, where the numeric value that pd.NA could represent is irrelevant to the outcome.

In[3]: 1 ** pd.NA

Out[3]: 1

In[3]: pd.NA ** 0

Out[3]: 1

For comparisons, pd.NA propagates through the operation, as you might expect.

In[4]: pd.NA == 2.75

Out[4]: <NA>

In[5]: pd.NA < 65

Out[5]: <NA>

In[6]: pd.NA == pd.NA

Out[6]: <NA>

To test for missing data:

In[7]: pd.NA is pd.NA

Out[7]: True

In[7]: pd.isna(pd.NA)

Out[7]: True

For boolean operations, pd.NA follows three-valued logic (a.k.a. Kleene logic), which is similar to SQL, R, and Julia.

In[8]: True | pd.NA

Out[8]: True

In[9]: False | pd.NA

Out[9]: <NA>

In[10]: True & pd.NA

Out[10]: <NA>

In[11]: False & pd.NA

Out[11]: False

If the value of pd.NA could change the outcome, then the operation returns <NA>. If the value of pd.NA could have no possible effect on the outcome, as in True | pd.NA and False & pd.NA, then the operation returns the correct value.

Challenge question: what would this evaluate to? (the answer is at the end.)
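The same rules apply element-wise to whole columns. The sketch below assumes pandas 1.0 or later and uses a nullable boolean array; the variable names are only for illustration.

```python
import pandas as pd

a = pd.array([True, False, None], dtype="boolean")

# The missing value cannot change these results, so they are fully known
or_true = a | True
and_false = a & False

# Here the missing value could change the result, so it stays missing
or_false = a | False

print(or_true)
print(and_false)
print(or_false)
```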

In[12]: ((True | pd.NA) & True) | (False & (pd.NA | False))

Out[12]: ???

Note: this pd.NA value is experimental and its behavior may change without warning. For more information, check out the Working with Missing Data section in the documentation.

Speed and Power

The main reason that C runs faster than Python is that C gets compiled while Python is interpreted (note: there is slightly more to it). Pandas, and parts of NumPy, use Cython to translate Python-like code into C and get a performance boost.

But there is another compiler that is quickly gaining traction in the Python community: Numba. Numba compiles Python code so that it approaches the speed of C and FORTRAN, without the complicated legwork of an extra compile step or installing a C/C++ compiler. Just import the numba library and decorate your function with @numba.jit().

Example:

import numba

@numba.jit(nopython=True, parallel=True)
def my_function():
    # Some really long and computationally intense task
    ...
    return the_result

Pandas 1.0 added an engine='numba' keyword to the rolling .apply() method (it requires raw=True), so you can run window operations with Numba. I think we will start to see more of this functionality throughout the Python data science ecosystem.

Visualizing Raw Data

Seeing is believing. Even before making graphs & plots with data, the first thing people do when they get data into a DataFrame object is run the .head() method to get an idea of what the data looks like.

You now have 2 more options to view your data easily.

# Create a DataFrame to print out

df = pd.DataFrame({

'some_numbers': [5.12, 8.66, 1.09],

'some_colors': ['blue', 'orange', 'maroon'],

'some_bools': [True, True, False],

'more_numbers': [2,5,8],

})

Beautiful JSON: There is now an indent= argument to the .to_json() method that will print out your data in a more human-readable way.

Instead of getting a text dump of output like this:

print(df.to_json(orient='records'))

[{"some_numbers":5.12,"some_colors":"blue","some_bools":true,"more_numbers":2},{"some_numbers":8.66,"some_colors":"orange","some_bools":true,"more_numbers":5},{"some_numbers":1.09,"some_colors":"maroon","some_bools":false,"more_numbers":8}]

You can get beautified JSON like this:

print(df.to_json(orient='records',indent=2))

[
  {
    "some_numbers":5.12,
    "some_colors":"blue",
    "some_bools":true,
    "more_numbers":2
  },
  {
    "some_numbers":8.66,
    "some_colors":"orange",
    "some_bools":true,
    "more_numbers":5
  },
  {
    "some_numbers":1.09,
    "some_colors":"maroon",
    "some_bools":false,
    "more_numbers":8
  }
]
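The indent= argument only changes the layout of the text, not the data. A quick sketch to check this, assuming pandas 1.0 or later and using a smaller made-up DataFrame:

```python
import json

import pandas as pd

df = pd.DataFrame({
    'some_numbers': [5.12, 8.66],
    'some_colors': ['blue', 'orange'],
})

compact = df.to_json(orient='records')
pretty = df.to_json(orient='records', indent=2)

# Both strings decode to the same list of records
same_data = json.loads(compact) == json.loads(pretty)

print(pretty)
print(same_data)
```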

Markdown: This is just another way to print out tables to the console. This produces a format that is easier to copy and paste into a markdown document. FYI, you need to install the tabulate package for this to work.

print(df.to_markdown())

| | some_numbers | some_colors | some_bools | more_numbers |

|---:|-------------:|:------------|:------------|-------------:|

| 0 | 5.12 | blue | True | 2 |

| 1 | 8.66 | orange | True | 5 |

| 2 | 1.09 | maroon | False | 8 |

Fun Facts

You used to be able to access the NumPy and datetime namespaces from within the Pandas package. Like so:

import pandas as pd

x = pd.np.array([1, 2, 3])

current_date = pd.datetime.today().date()

But you should not do that. This makes code confusing. Import libraries by themselves.

from datetime import date

import numpy as np

import pandas as pd

x = np.array([1,2,3])

current_date = date.today()

This namespace hack is finally deprecated. Check out the PR discussion here.

TensorFlow 2.0

(Released September 30, 2019)

TensorFlow is a deep-learning Python package that has been widely adopted throughout the industry. It started as an internal Google Brain project but was released to the public on November 9th, 2015. Version 1.0 came out in February of 2017. Now we’ve made it to 2.0.

TensorFlow gained most of its popularity for industrial use cases due to its portability and ease of deploying models into production-level software. But for research use cases, many people found that it lacked the ease of creating and running machine learning experiments compared to other frameworks like PyTorch (read more).

Whether you loved or hated TensorFlow 1.X, here’s what you need to know.

More Focus on Eager Execution

In TensorFlow 1.X you would normally build out the whole control flow of operations you want to perform, then execute everything at once by running a session.

This is not very Pythonic. Python users like being able to run code interactively and inspect the data as they go. Eager execution was released in TensorFlow v1.7, and in 2.0 it is the default way that computation is handled. Here is an example.

Without eager execution.

In[1]: x = tf.constant([[1,0,0],[0,1,0],[0,0,1]], dtype=float)

In[2]: print(x)

Out[2]: Tensor("Const:0", shape=(3, 3), dtype=float32)

With eager execution.

In[1]: x = tf.constant([[1,0,0],[0,1,0],[0,0,1]], dtype=float)

In[2]: print(x)

Out[2]: tf.Tensor(
[[1. 0. 0.]
 [0. 1. 0.]
 [0. 0. 1.]], shape=(3, 3), dtype=float32)

How much easier is it to understand and debug what is going on when you can actually see the numbers you are working with? This makes TensorFlow more compatible with native Python control flow, therefore easier to create and understand. (Read more)
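To make the point about native control flow concrete, here is a small sketch assuming TensorFlow 2.x is installed. The variable names and the threshold are made up for illustration.

```python
import tensorflow as tf

x = tf.constant([[1, 0, 0], [0, 1, 0], [0, 0, 1]], dtype=tf.float32)

# In 2.x this is True by default: operations run immediately
eager = tf.executing_eagerly()

# The result is a concrete value, so a plain Python if works on it
trace = tf.linalg.trace(x)
if trace > 2:
    label = "large trace"
else:
    label = "small trace"

# .numpy() hands the values back as a NumPy array
print(eager, float(trace), label)
print(x.numpy())
```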

Functional, but still Portable

Part of the reason ML practitioners in industry settings adopted TensorFlow earlier on was its portability. You could build out the logic (or execution graph) in Python, export it, and seamlessly run it with C, JavaScript, etc.

TensorFlow heard the people’s call to make the interface more Python native but kept the ease of transporting the code to other execution environments with the @tf.function decorator. This decorator uses AutoGraph to automatically translate your Python functions into a TensorFlow execution graph.

for/while -> [tf.while_loop](https://www.tensorflow.org/api_docs/python/tf/while_loop) (break and continue are supported)

if -> [tf.cond](https://www.tensorflow.org/api_docs/python/tf/cond)

for _ in dataset -> dataset.reduce

# TensorFlow 1.X

outputs = session.run(f(placeholder),

feed_dict={placeholder: input})

# TensorFlow 2.0

outputs = f(input)
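A short sketch of a decorated function that uses both a loop and a conditional, assuming TensorFlow 2.x. The function count_even is a made-up example; because the loop runs over a tensor range and the condition depends on a tensor, AutoGraph is expected to convert them into graph operations.

```python
import tensorflow as tf


@tf.function
def count_even(limit):
    total = tf.constant(0)
    # Loop over a tensor range: converted to a graph loop
    for i in tf.range(limit):
        # Condition on a tensor: converted to a graph conditional
        if i % 2 == 0:
            total += 1
    return total


# Called like a normal Python function, no session needed
result = count_even(tf.constant(10))
print(int(result))
```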

Native support of Keras

Keras was originally developed as a higher level deep-learning API that wrapped around TensorFlow, Theano, or CNTK. From the front page:

“It was developed with a focus on enabling fast experimentation. Being able to go from idea to result with the least possible delay is key to doing good research.”

TensorFlow decided to natively support the Keras API within the tf namespace. This gives users a modular approach to model experimentation and addresses the complaint that “TensorFlow is not as good for research”.

Keras still exists as a separate library but recommends that users who utilize the TensorFlow backend switch to tf.keras. So now when people say they are using “Keras” I do not know if it is tf.keras or the original Keras … oh well.
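For reference, building a model through the tf namespace looks roughly like this. It is a minimal sketch assuming TensorFlow 2.x, and the layer sizes and input shape are arbitrary.

```python
import tensorflow as tf

# A tiny model assembled from modular Keras layers inside tf.keras
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(8, activation="relu"),
    tf.keras.layers.Dense(1),
])
model.compile(optimizer="adam", loss="mse")

# Run a dummy batch of two rows through the model
predictions = model(tf.zeros((2, 4)))
print(predictions.shape)
```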

API Refactor

Manually upgrading your old code from v1.X to v2.X is not going to be easy. But the TensorFlow developers made it less painful by creating a script to automatically reorder arguments, change default parameters, and switch module namespaces.

From the guides:

“Many APIs are either gone or moved in TF 2.0. Some of the major changes include removing tf.app, tf.flags, and tf.logging in favor of the now open-source absl-py, rehoming projects that lived in tf.contrib, and cleaning up the main tf.* namespace by moving lesser used functions into subpackages like tf.math. Some APIs have been replaced with their 2.0 equivalents - tf.summary, tf.keras.metrics, and tf.keras.optimizers. The easiest way to automatically apply these renames is to use the v2 upgrade script.”

Conclusion

Pandas is deprecating the use of NumPy and datetime from within its namespace and suggesting that users import them directly. TensorFlow, on the other hand, recommends using the Keras that lives inside TensorFlow rather than importing Keras directly.

I hope you found this useful. Let me know if there are any big features that you think should be included.

Thanks for reading!
