Complete Guide to Time Series Forecasting in Python

Math for the Seasons

An explanation of how to leverage python libraries to quickly forecast seasonal time series data.

Hi! I’m Jose Portilla and I teach Python, Data Science and Machine Learning online to over 500,000 students! If you’re interested in learning more about how to do types of analysis and visualization in this blog posts, you can check out discount coupon links to my courses on Python for Data Science and Machine Learning and using Python for Financial Analysis and Algorithmic Trading.

Alright, on to the discussion of time series!

Time Series Basics

Let’s first discuss what a time series is and what it’s not. We’ll also talk about what kinds of time series are suitable for ARIMA based forecasting models.

Time Series data is experimental data that has been observed at different points in time (usually evenly spaced, like once a day). For example, the data of airline ticket sales per day is a time series. However, just because a series of events has a time element does not automatically make it a time series, such as the dates of major airline disasters, which are randomly spaced and are not time series. These types of random processes are known as point process.

Time Series have several key features such as trend, seasonality, and noise.

What we’ll be doing in this article is analyzing these features of a time series data set, and then seeing if we can use mathematical models to forecast into the future. We’ll also see how we can split our original time series data set to evaluate how well our model predicts the future.

Forecasting with ARIMA

“Prediction is very difficult, especially about the future”.

Forecasting is the process of making predictions of the future, based on past and present data. One of the most common methods for this is the ARIMA model, which stands for AutoRegressive Integrated Moving Average.

In an ARIMA model there are 3 parameters that are used to help model the major aspects of a times series: seasonality, trend, and noise. These parameters are labeled **p,d,**and q.

p is the parameter associated with the auto-regressive aspect of the model, which incorporates past values. For example, forecasting that if it rained a lot over the past few days, you state its likely that it will rain tomorrow as well.

d is the parameter associated with the integrated part of the model, which effects the amount of differencing to apply to a time series. You can imagine an example of this as forecasting that the amount of rain tomorrow will be similar to the amount of rain today, if the daily amounts of rain have been similar over the past few days.

q is the parameter associated with the moving average part of the model.

If our model has a seasonal component (we’ll show this in more detail later), we use a seasonal ARIMA model (SARIMA). In that case we have another set of parameters: P,D, and Q which describe the same associations as p,d, and q, but correspond with the seasonal components of the model.

The methods we will employ in this blog example will only take in data from a uni-variate time series. That means we really are only considering the relationship between the y-axis value the x-axis time points. We’re not considering outside factors that may be effecting the time series.

A common mistake beginners make is they immediately start to apply ARIMA forecasting models to data that has many outside factors, such as stock prices or a sports team’s performance. While ARIMA can be a powerful and relevant tool for times series related to those topics, if you only use it by itself and don’t account for outside factors, such as a CEO getting fired or an injury on the team, you won’t have good results. Keep this in mind as you begin to apply these concepts to your own data sets.

Variation

One of the most important features of a time series is variation. Variations are patterns in the times series data. A time series that has patterns that repeat over known and fixed periods of time is said to have seasonality. Seasonality is a general term for variations that periodically repeat in data. In general, we think of variations as 4 categories.: Seasonal, Cyclic, Trend, and Irregular fluctuations.

Seasonal variation is usually defined as variation that is annual in period, such as swimsuit sales being lower in winter and higher in summer. Cyclic Variation is a variation that occurs at other fixed periods, such as the daily variation in temperature. Both Seasonal and Cyclic variation would be examples of seasonality in a time series data set.

Trends are long-term changes in the mean level, relative to the number of observations.

Our Data

Let’s explore the Industrial production of electric and gas utilities in the United States, from the years 1985–2018, with our frequency being Monthly production output.

You can access this data here: https://fred.stlouisfed.org/series/IPG2211A2N

This data measures the real output of all relevant establishments located in the United States, regardless of their ownership, but not those located in U.S. territories.

Processing the Data

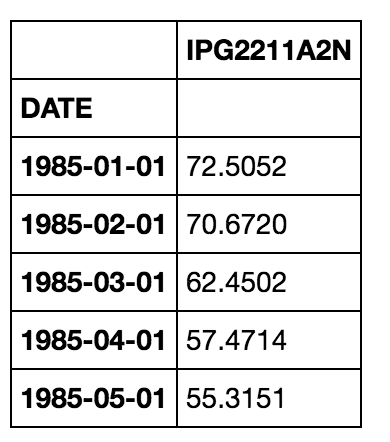

We’ll need to do some quick processing to convert this data to have a time series index. Luckily Pandas makes this easy, let’s quickly check the head of the data (the first 5 rows) to see what default format it comes in:

import pandas as pd

data = pd.read_csv(“Electric_Production.csv”,index_col=0)

data.head()

Right now our index is actually just a list of strings that look like a date, we’ll want to adjust these to be timestamps, that way our forecasting analysis will be able to interpret these values.

data.index = pd.to_datetime(data.index)

Let’s also rename this column since its hard to remember what “IPG2211A2N” code stands for:

data.columns = ['Energy Production']

We can plot out this data quickly with cufflinks and plotly:

import plotly.plotly as ply

import cufflinks as cf

data.iplot(title="Energy Production Jan 1985--Jan 2018")

Giving us this resulting plot:

Decomposition

Here we can see there is an upward trend. We can use statsmodels to perform a decomposition of this time series. The decomposition of time series is a statistical task that deconstructs a time series into several components, each representing one of the underlying categories of patterns. With statsmodels we will be able to see the trend, seasonal, and residual components of our data.

We can use an additive model when it seems that the trend is more linear and the seasonality and trend components seem to be constant over time (e.g. every year we add 100 units of energy production). A multiplicative model is more appropriate when we are increasing (or decreasing) at a non-linear rate (e.g. each year we double the amount of energy production everyyear).

Based off the previous chart, it looks like the trend in these earlier days is slightly increasing at a higher rate than just linear (although it is a bit hard to tell from this one plot, we can always experiment with additive versus multiplicative methods.).

Again, Python and Statsmodels make this task incredibly easy in just a few lines of code:

from plotly.plotly import plot_mpl

from statsmodels.tsa.seasonal import seasonal_decompose

result = seasonal_decompose(data, model=’multiplicative’)

fig = result.plot()

plot_mpl(fig)

Giving us this plot below:

Not bad for what is essentially one line of code (not counting imports and plot calls)!

From the plot above we can clearly see the seasonal component of the data, and we can also see the separated upward trend of the data.

Trends can be upward or downward, and can be linear or non-linear. It is important to understand your data set to know whether or not a significant period of time has passed to identify an actual trend.

Irregular fluctuations are abrupt changes that are random and unpredictable.

Performing the Seasonal ARIMA

Now that we’ve analyzed the data, we can clearly see we have a time series with a seasonal component, so it make sense to use a Seasonal ARIMA model. In order to do this we will need to choose p,d,q values for the ARIMA, and P,D,Q values for the Seasonal component.

There are many ways to choose these values statistically, such as looking at auto-correlation plots, correlation plots, domain experience, etc.

One simple approach is to perform a grid search over multiple values of p,d,q,P,D,and Q using some sort of performance criteria. The Akaike information criterion (AIC) is an estimator of the relative quality of statistical models for a given set of data. Given a collection of models for the data, AIC estimates the quality of each model, relative to each of the other models.

The AIC value will allow us to compare how well a model fits the data and takes into account the complexity of a model, so models that have a better fit while using fewer features will receive a better (lower) AIC score than similar models that utilize more features.

The pyramid-arima library for Python allows us to quickly perform this grid search and even creates a model object that you can fit to the training data.

This library contains an auto_arima function that allows us to set a range of p,d,q,P,D,and Q values and then fit models for all the possible combinations. Then the model will keep the combination that reported back the best AIC value.

from pyramid.arima import auto_arima

stepwise_model = auto_arima(data, start_p=1, start_q=1,

max_p=3, max_q=3, m=12,

start_P=0, seasonal=True,

d=1, D=1, trace=True,

error_action='ignore',

suppress_warnings=True,

stepwise=True)

print(stepwise_model.aic())

The resulting best model parameters gave us an AIC value of 1771.29.

We now have a model that we can fit, in order to do this , we will need training data and test data.

Train Test Split

We can then fit the stepwise_model object to a training data set. Because this is a time series forecast, we will “chop off” a portion of our latest data and use that as the test set. Then we will train on the rest of the data and forecast into the future. Afterwards we can compare our forecast with the section of data we chopped off.

We’ll train on 20 years of data, from the years 1985–2015 and test our forecast on the years after that and compare it to the real data:

train = data.loc['1985-01-01':'2016-12-01']

test = data.loc['2017-01-01':]

Train the Model

We can then train the model by simply calling .fit on the stepwise model and passing in the training data:

stepwise_model.fit(train)

Evaluation

Now that the model has been fitted to the training data, we can forecast into the future. Recall that our test data set is from 2015–01–01 all the way to 2018–01–01. So if we check the length of our test data we get 37 rows, or 37 time periods. That is the value we will use for our .predict() method call:

future_forecast = stepwise_model.predict(n_periods=37)

# This returns an array of predictions:

>>>print(future_forecast)

array([ 114.35302037, 105.67472349, 91.62172016, 93.11965624,

103.13943782, 112.0750119 , 110.28775882, 100.3846244 ,

92.76377402, 96.56146867, 110.15807481, 122.16905229,

111.76255057, 102.1074658 , 90.72177437, 92.21641046,

103.29671997, 112.53381746, 111.79663986, 101.28342664,

92.21562554, 95.42427613, 109.83787975, 118.78803148,

108.06504426, 101.03926634, 89.8586184 , 91.90975603,

102.9426644 , 112.42626585, 111.2655725 , 100.67041402,

92.32953027, 95.54048842, 110.86398308, 120.63508802,

108.74454694])

Let’s reorganize this set of predictions by creating a dataframe that contains our future forecast and then concatenating that with the original data.

We can then plot this to view how well our prediction forecast matched up with the test set for which we have the real data:

future_forecast = pd.DataFrame(future_forecast,index = test.index,columns=[‘Prediction’])

pd.concat([test,future_forecast],axis=1).iplot()

We can also just compare this to the entire data set to get a larger picture of the context of our prediction.

Next Steps

Now that we’ve evaluated our data on the test set and our satisfied with the performance, the next step would be to refit our model to our entire data set and then forecast into the real future.

30s ad

☞ Complete Python Programming with examples for beginners

☞ Selenium WebDriver and Python: WebTest Automation Course

☞ Interactive Data Visualization with Python & Bokeh

☞ Master the Python Interview | Real Banks&Startups questions

☞ Ensemble Machine Learning in Python: Random Forest, AdaBoost

Suggest:

☞ Python Machine Learning Tutorial (Data Science)

☞ Python Tutorial for Data Science

☞ Machine Learning Zero to Hero - Learn Machine Learning from scratch

☞ Complete Python Tutorial for Beginners (2019)