9 Things You Should Know About TensorFlow

This morning I found myself summarizing my favorite bits from a talk that I enjoyed at Google Cloud Next in San Francisco, What’s New with TensorFlow?

Then I thought about it for a moment and couldn’t see a reason not to share my super-short summary with you (except maybe that you might not watch the video — you totally should check it out, the speaker is awesome) so here goes…

Discovered with the help of TensorFlow, the planet Kepler-90i makes the Kepler-90 system the only other system we know of that has eight planets in orbit around a single star. No system has been found with more than eight planets, so I guess that means we’re tied with Kepler-90 for first place (for now).

#1 It’s a powerful machine learning framework

TensorFlow is a machine learning framework that might be your new best friend if you have a lot of data and/or you’re after the state-of-the-art in AI: deep learning. Neural networks. Big ones. It’s not a data science Swiss Army Knife, it’s the industrial lathe… which means you can probably stop reading if all you want to do is put a regression line through a 20-by-2 spreadsheet.

But if big is what you’re after, get excited. TensorFlow has been used to go hunting for new planets, prevent blindness by helping doctors screen for diabetic retinopathy, and help save forests by alerting authorities to signs of illegal deforestation activity. It’s what AlphaGo and Google Cloud Vision are built on top of and it’s yours to play with. TensorFlow is open source, so you can download it for free and get started immediately.

#2 The bizarre approach is optional

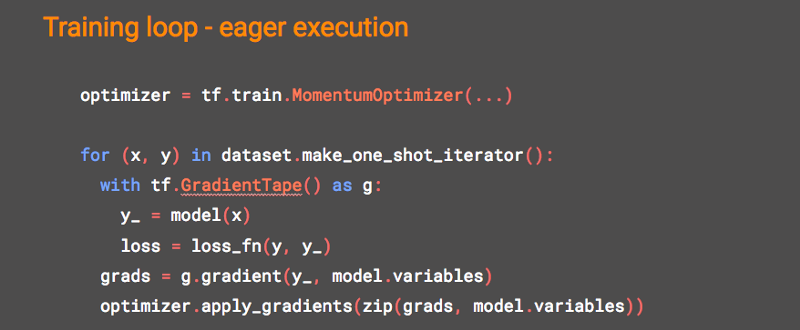

I’m head over heels for TensorFlow Eager.

If you tried TensorFlow in the old days and ran away screaming because it forced you to code like an academic/alien instead of like a developer, come baaaack!

TensorFlow eager execution lets you interact with it like a pure Python programmer: all the immediacy of writing and debugging line-by-line instead of holding your breath while you build those huge graphs. I’m a recovering academic myself (and quite possibly an alien), but I’ve been in love with TF eager execution since it came out. So eager to please!
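To make "line-by-line" concrete, here is a minimal sketch of eager-style code. It assumes TensorFlow 2.x, where eager execution is on by default (in 1.x releases you had to switch it on with `tf.enable_eager_execution()`). The matrix values are made up purely for illustration.

```python
import tensorflow as tf

# Assumption: TensorFlow 2.x, where eager execution is the default.
x = tf.constant([[1.0, 2.0], [3.0, 4.0]])

# The matmul runs right away; there is no graph to build or session to run.
y = tf.matmul(x, x)
print(y.numpy())

# Tensor values can drive ordinary Python control flow.
total = tf.reduce_sum(y)
if total > 50:
    print("sum is large:", float(total))
```

Every line gives you a real value you can print or poke at in a debugger, which is the whole appeal.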

#3 You can build neural networks line-by-line

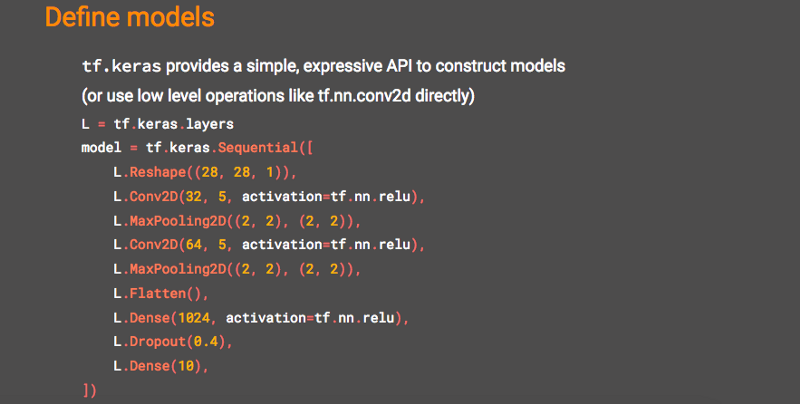

Keras + TensorFlow = easier neural network construction!

Keras is all about user-friendliness and easy prototyping, something old TensorFlow sorely craved more of. If you like object oriented thinking and you like building neural networks one layer at a time, you’ll love tf.keras. In just the few lines of code below, we’ve created a sequential neural network with the standard bells and whistles like dropout (remind me to wax lyrical about my metaphors for dropout sometime, they involve staplers and the flu).

Oh, you like puzzles, do you? Patience. Don’t think too much about staplers.
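For a feel of what layer-by-layer construction looks like, here is a small sketch with tf.keras. It is not the code from the talk: the 28x28 input shape, the layer sizes, and the dropout rate are illustrative assumptions, and it targets TensorFlow 2.x.

```python
import tensorflow as tf

# Illustrative assumptions: 28x28 inputs, 10 output classes, 20% dropout.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(28, 28)),
    tf.keras.layers.Flatten(),                        # 28x28 -> 784 values
    tf.keras.layers.Dense(128, activation="relu"),    # hidden layer
    tf.keras.layers.Dropout(0.2),                     # randomly zero 20% of units while training
    tf.keras.layers.Dense(10, activation="softmax"),  # one probability per class
])

model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)
model.summary()
```

From here, `model.fit(...)` on your own data is the usual next step.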

#4 It’s not only Python

Okay, you’ve been complaining about TensorFlow’s Python monomania for a while now. Good news! TensorFlow is not just for Pythonistas anymore. It now runs in many languages, from R to Swift to JavaScript.

#5 You can do everything in the browser

Speaking of JavaScript, you can train and execute models in the browser with TensorFlow.js. Go nerd out on the cool demos, I’ll still be here when you get back.

#6 There’s a Lite version for tiny devices

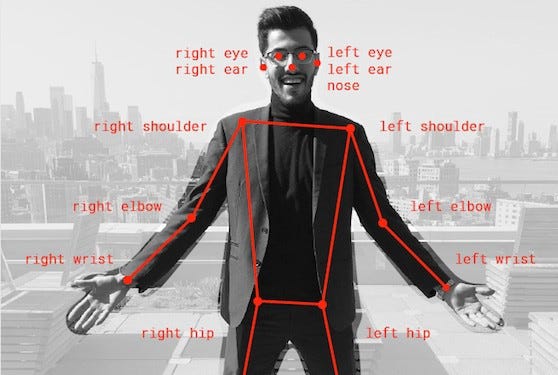

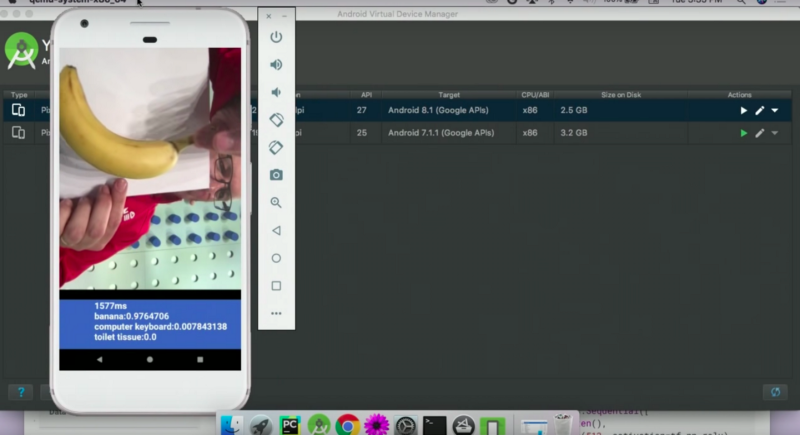

Got a clunker desktop from a museum? Toaster? (Same thing?) TensorFlow Lite brings model execution to a variety of devices, including mobile and IoT, giving you an inference speedup of more than 3x over original TensorFlow. Yes, now you can get machine learning on your Raspberry Pi or your phone. In the talk, Laurence does a brave thing by live-demoing image classification on an Android emulator in front of thousands… and it works.
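If you are wondering how a model gets onto the small device in the first place, the rough idea is to convert it to the TensorFlow Lite format first. The sketch below assumes TensorFlow 2.x and uses a tiny throwaway Keras model (not the one from the demo) just so there is something to convert.

```python
import tensorflow as tf

# A deliberately tiny, untrained model; its shape is an illustrative assumption.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(3, activation="softmax"),
])

# Convert the Keras model to the TensorFlow Lite flatbuffer format.
converter = tf.lite.TFLiteConverter.from_keras_model(model)
tflite_model = converter.convert()

# The result is a bytes object you could write to a .tflite file and ship to a device.
print(f"Converted model size: {len(tflite_model)} bytes")
```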

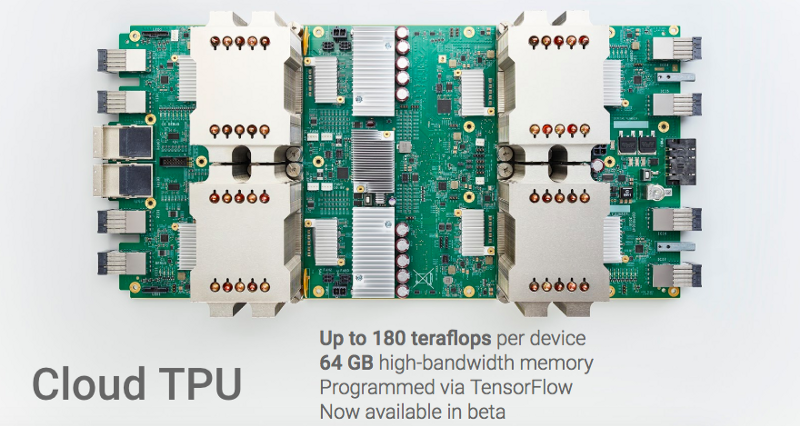

#7 Specialized hardware just got better

If you’re tired of waiting for your CPU to finish churning through your data to train your neural network, you can now get your hands on hardware specially designed for the job with Cloud TPUs. The T is for tensor. Just like TensorFlow… coincidence? I think not! A few weeks ago, Google announced version 3 TPUs in alpha.

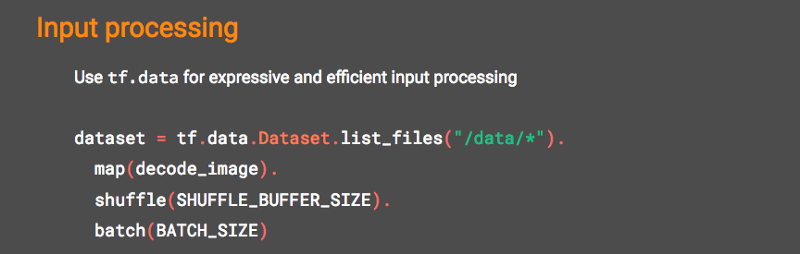

#8 The new data pipelines are much improved

What’s that you’re doing with NumPy over there? In case you wanted to do it in TensorFlow but then rage-quit, the tf.data namespace now makes your input processing in TensorFlow more expressive and efficient. tf.data gives you fast, flexible, and easy-to-use data pipelines synchronized with training.
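Here is a minimal sketch of what a tf.data input pipeline can look like, assuming TensorFlow 2.4 or later (for `tf.data.AUTOTUNE`). The toy arrays, batch size, and shuffle buffer are made-up values for illustration.

```python
import numpy as np
import tensorflow as tf

# Toy data (illustrative): 10 examples with one feature each and a binary label.
features = np.arange(10, dtype=np.float32).reshape(10, 1)
labels = (features[:, 0] > 4).astype(np.int32)

dataset = (
    tf.data.Dataset.from_tensor_slices((features, labels))
    .shuffle(buffer_size=10, seed=0)  # shuffle the examples
    .batch(4)                         # group them into batches of up to 4
    .prefetch(tf.data.AUTOTUNE)       # prepare the next batch while the current one is in use
)

batch_sizes = []
for batch_features, batch_labels in dataset:
    batch_sizes.append(int(batch_features.shape[0]))
    print(batch_features.shape, batch_labels.numpy())
```

A dataset like this can be passed straight to `model.fit(dataset)` on a Keras model, so the pipeline feeds training directly.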

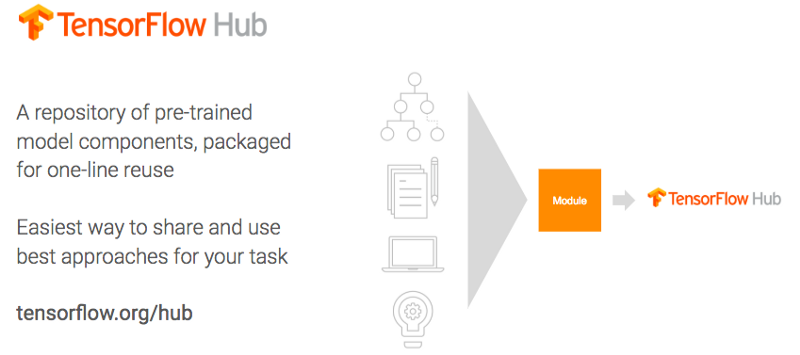

#9 You don’t need to start from scratch

You know what’s not a fun way to get started with machine learning? A blank new page in your editor and no example code for miles. With TensorFlow Hub, you can engage in a more efficient version of the time-honored tradition of helping yourself to someone else’s code and calling it your own (otherwise known as professional software engineering).

TensorFlow Hub is a repository for reusable pre-trained machine learning model components, packaged for one-line reuse. Help yourself!

While we’re on the subject of community and not struggling alone, you might like to know that TensorFlow just got an official YouTube channel and blog.

That concludes my summary, so here’s the full talk to entertain you for the next 42 minutes.
