Why should I use a Reverse Proxy if Node.js is Production-Ready?

The year was 2012. PHP and Ruby on Rails reigned as the supreme server-side technologies for rendering web applications. But a bold new contender took the community by storm, one which managed to handle 1M concurrent connections. This technology was none other than Node.js, and it has steadily increased in popularity ever since.

Unlike most competing technologies of the time, Node.js came with a web server built in. Having this server meant developers could bypass a myriad of configuration files such as php.ini and a hierarchical collection of .htaccess files. Having a built-in web server also afforded other conveniences, like the ability to process files as they were being uploaded and the ease of implementing WebSockets.

Every day Node.js-powered web applications happily handle billions of requests. Most of the largest companies in the world are powered in some manner by Node.js. To say that Node.js is Production-Ready is certainly an understatement. However, there’s one piece of advice which has held true since Node.js’s inception: one should not directly expose a Node.js process to the web and should instead hide it behind a reverse proxy. But, before we look at the reasons why we would want to use a reverse proxy, let’s first look at what one is.

What is a Reverse Proxy?

A reverse proxy is basically a special type of web server which receives requests, forwards them to another HTTP server somewhere else, receives a reply, and forwards the reply to the original requester.

A reverse proxy doesn’t usually send the exact request, though. Typically it will modify the request in some manner. For example, if the reverse proxy lives at www.example.org:80, and is going to forward the request to ex.example.org:8080, it will probably rewrite the original Host header to match that of the target. It may also modify the request in other ways, such as cleaning up a malformed request or translating between protocols.
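To make the forwarding step concrete, here is a minimal sketch built only on Node.js's http module. The names rewriteHeaders and createReverseProxy are hypothetical, and the X-Forwarded-Host and X-Forwarded-For headers are a common convention assumed here for illustration. It is a teaching aid, not a substitute for a dedicated tool.

```javascript
const http = require('http');

// Hypothetical helper: copy the incoming headers, but point Host at the target
// and record the original host and client address for the backend.
function rewriteHeaders(headers, target, clientIp) {
  const rewritten = { ...headers, host: `${target.host}:${target.port}` };
  if (headers.host) {
    rewritten['x-forwarded-host'] = headers.host;
  }
  const previous = headers['x-forwarded-for'];
  rewritten['x-forwarded-for'] = previous ? `${previous}, ${clientIp}` : clientIp;
  return rewritten;
}

// Hypothetical factory: returns an http.Server that forwards every request
// to `target` and streams the reply back to the original requester.
function createReverseProxy(target) {
  return http.createServer((clientReq, clientRes) => {
    const upstreamReq = http.request({
      host: target.host,
      port: target.port,
      method: clientReq.method,
      path: clientReq.url,
      headers: rewriteHeaders(clientReq.headers, target, clientReq.socket.remoteAddress),
    }, (upstreamRes) => {
      clientRes.writeHead(upstreamRes.statusCode, upstreamRes.headers);
      upstreamRes.pipe(clientRes);
    });

    // If the backend cannot be reached, answer with 502 Bad Gateway.
    upstreamReq.on('error', () => {
      if (!clientRes.headersSent) {
        clientRes.writeHead(502);
      }
      clientRes.end();
    });

    clientReq.pipe(upstreamReq);
  });
}
```

Under these assumptions, createReverseProxy({ host: 'ex.example.org', port: 8080 }).listen(80) would accept requests on port 80 and relay them to the target.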

Once the reverse proxy receives a response it may then modify that response as well. A common approach is to rewrite headers that reference the target host, such as Location on a redirect, so that they match the original request. The body of the response can be changed too. A common modification is to perform gzip compression on the response. Another common change is to enable HTTPS support when the underlying service only speaks HTTP.

Reverse proxies can also dispatch incoming requests to multiple backend instances. If a service is exposed at api.example.org, a reverse proxy could forward requests to api1.internal.example.org, api2, etc.
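One simple way to pick a backend is round-robin, sketched below. The article doesn't say which strategy a given proxy uses, so treat round-robin as an assumption. The helper name createRoundRobin and the fully spelled-out api2 hostname are likewise made up for illustration.

```javascript
// Hypothetical dispatcher: cycles through the backend list in order.
function createRoundRobin(backends) {
  if (backends.length === 0) {
    throw new Error('at least one backend is required');
  }
  let index = 0;
  return function nextBackend() {
    const backend = backends[index];
    index = (index + 1) % backends.length;
    return backend;
  };
}

const nextBackend = createRoundRobin([
  'api1.internal.example.org',
  'api2.internal.example.org', // assumed full name for "api2"
]);
console.log(nextBackend()); // api1.internal.example.org
console.log(nextBackend()); // api2.internal.example.org
console.log(nextBackend()); // api1.internal.example.org
```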

There are many different reverse proxies out there. Two of the more popular ones are Nginx and HAProxy. Both of these tools are able to perform gzip compression and add HTTPS support, and they specialize in other areas as well. Nginx is the more popular of the two choices, and also has some other beneficial capabilities such as the ability to serve static files from a filesystem, so we’ll use it as an example throughout this article.

Now that we know what a reverse proxy is, we can look into why we would want to use one with Node.js.

Why should I use a Reverse Proxy?

SSL Termination

SSL termination is one of the most popular reasons one uses a reverse proxy. Changing the protocol of one’s application from http to https does take a little more work than appending an s. Node.js itself is able to perform the necessary encryption and decryption for https, and can be configured to read the necessary certificate files.
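For reference, handling HTTPS inside the application might look roughly like the sketch below. The helper names loadTlsOptions and createHttpsApp are hypothetical, and the file paths are whatever your deployment supplies.

```javascript
const fs = require('fs');
const https = require('https');

// Hypothetical helper: read the private key and certificate from disk.
function loadTlsOptions(keyPath, certPath) {
  return {
    key: fs.readFileSync(keyPath),
    cert: fs.readFileSync(certPath),
  };
}

// Hypothetical factory: the application itself terminates TLS, so it now
// owns the certificate files and has to deal with their renewal.
function createHttpsApp(keyPath, certPath) {
  return https.createServer(loadTlsOptions(keyPath, certPath), (req, res) => {
    res.writeHead(200, { 'Content-Type': 'text/plain' });
    res.end('Hello over HTTPS\n');
  });
}
```

Note that the key material ends up in the memory of the same process that runs all of the application's dependencies.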

However, configuring the protocol used to communicate with our application, and managing ever-expiring SSL certificates, is not really something that our application needs to be concerned about. Checking certificates into a codebase would not only be tedious, but also a security risk. Acquiring certificates from a central location upon application startup also has its risks.

For this reason it’s better to perform SSL termination outside of the application, usually within a reverse proxy. Thanks to tools like certbot, which obtains certificates from Let’s Encrypt, maintaining certificates with Nginx is as easy as setting up a cron job. Such a job can automatically install new certificates and dynamically reconfigure the Nginx process. This is a much less disruptive process than, say, restarting each Node.js application instance.

Also, when a reverse proxy performs SSL termination, only code written by the reverse proxy authors has access to your private key. However, if your Node.js application is handling SSL, then every single third-party module used by your application, even a potentially malicious one, will have access to that private key.

gzip Compression

gzip compression is another feature which you should offload from the application to a reverse proxy. gzip compression policies are best set at an organization level, instead of being specified and configured separately for each application.

It’s best to use some logic when deciding what to gzip. For example, files which are very small, perhaps smaller than 1 KB, are probably not worth compressing, as the gzip-compressed version can sometimes be larger, or the CPU overhead of having the client decompress the file might not be worth it. Also, binary data might not benefit from compression, depending on the format. gzip is also not something which can simply be enabled or disabled: it requires examining the incoming Accept-Encoding header for compatible compression algorithms.
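The decision described above could be sketched as a small predicate. The 1 KB threshold comes from the paragraph, while the list of compressible content types and the function names are assumptions chosen for illustration. The Accept-Encoding parsing ignores the `*` wildcard for brevity.

```javascript
// Assumed policy values for illustration; tune them for your organization.
const MIN_SIZE_BYTES = 1024;
const COMPRESSIBLE_TYPES = /^(text\/|application\/(json|javascript|xml))/;

// Returns true when the client lists gzip in Accept-Encoding with a non-zero q-value.
function clientAcceptsGzip(acceptEncoding) {
  if (!acceptEncoding) return false;
  return acceptEncoding.split(',').some((entry) => {
    const [coding, ...params] = entry.trim().toLowerCase().split(';');
    if (coding.trim() !== 'gzip') return false;
    const q = params.map((p) => p.trim()).find((p) => p.startsWith('q='));
    return q === undefined || parseFloat(q.slice(2)) > 0;
  });
}

// Compress only when the client supports gzip, the body is large enough,
// and the content type is one that usually shrinks.
function shouldGzip({ acceptEncoding, contentType, contentLength }) {
  return clientAcceptsGzip(acceptEncoding)
    && contentLength >= MIN_SIZE_BYTES
    && COMPRESSIBLE_TYPES.test(contentType || '');
}
```

Every application that compresses its own responses needs some version of this logic, which is part of the argument for configuring it once in the proxy.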

Clustering

JavaScript is a single-threaded language, and accordingly, Node.js has traditionally been a single-threaded server platform (though, the currently-experimental worker thread support available in Node.js v10 aims to change this). This means getting as much throughput from a Node.js application as possible requires running roughly the same number of instances as there are CPU cores.

Node.js comes with a built-in cluster module which can do just that. Incoming HTTP requests will be made to a master process then be dispatched to cluster workers.
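A minimal sketch of that pattern follows. The function name startClustered is hypothetical, and cluster.isMaster is the property name from the Node.js v10 era (newer releases also expose it as cluster.isPrimary).

```javascript
const cluster = require('cluster');
const http = require('http');
const os = require('os');

// Hypothetical entry point: the master forks workers, each worker serves HTTP.
function startClustered(port, workerCount = os.cpus().length) {
  if (cluster.isMaster) {
    for (let i = 0; i < workerCount; i++) {
      cluster.fork();
    }
    // Start a replacement whenever a worker exits.
    cluster.on('exit', () => cluster.fork());
    return null;
  }
  // Worker processes share the listening port through the master.
  return http.createServer((req, res) => {
    res.end(`Handled by worker ${process.pid}\n`);
  }).listen(port);
}
```

Because cluster.fork() re-runs the same script, the script would call startClustered(3000) (port chosen arbitrarily here) at the top level so that both the master and the workers pass through the same branch logic.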

However, dynamically scaling cluster workers would take some effort. There’s also usually added overhead in running an additional Node.js process as the dispatching master process. Also, scaling processes across different machines is something that cluster can’t do.

For these reasons it’s sometimes better to use a reverse proxy to dispatch requests to running Node.js processes. Such reverse proxies can be dynamically configured to point to new application processes as they arrive. Really, an application should just be concerned with doing its own work. It shouldn’t be concerned with managing multiple copies of itself and dispatching requests.

Enterprise Routing

When dealing with massive web applications, such as the ones built by multi-team enterprises, it’s very useful to have a reverse proxy for determining where to forward requests to. For example, requests made to example.org/search/* can be routed to the internal search application while other requests made to example.org/profile/* can be dispatched to the internal profile application.
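The routing decision itself is just a prefix lookup, which the following sketch illustrates. The internal hostnames, the default upstream, and the function name upstreamFor are all hypothetical.

```javascript
// Hypothetical routing table: path prefix -> internal upstream.
const ROUTES = [
  { prefix: '/search/', upstream: 'http://search.internal.example.org' },
  { prefix: '/profile/', upstream: 'http://profile.internal.example.org' },
];
const DEFAULT_UPSTREAM = 'http://web.internal.example.org';

// Return the upstream for the first matching prefix, or the default.
function upstreamFor(path) {
  const match = ROUTES.find((route) => path.startsWith(route.prefix));
  return match ? match.upstream : DEFAULT_UPSTREAM;
}

console.log(upstreamFor('/search/nodejs')); // http://search.internal.example.org
console.log(upstreamFor('/profile/alice')); // http://profile.internal.example.org
console.log(upstreamFor('/about'));         // http://web.internal.example.org
```

In a reverse proxy this table lives in declarative configuration rather than in any one team's application code.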

Such tooling allows for other powerful features such as sticky sessions, Blue/Green deployments, A/B testing, etc. I’ve personally worked in a codebase where such logic was performed within the application and this approach made the application quite hard to maintain.

Performance Benefits

Node.js is highly malleable. It is able to serve static assets from a filesystem, perform gzip compression on HTTP responses, and handle HTTPS with built-in support, among many other features. It even has the ability to run multiple instances of an application and perform its own request dispatching, by way of the cluster module.

And yet, ultimately it is in our best interest to let a reverse proxy handle these operations for us, instead of having our Node.js application do it. Beyond the reasons listed above, another reason to perform these operations outside of Node.js is efficiency.

SSL encryption and gzip compression are two highly CPU-bound operations. Dedicated reverse proxy tools, like Nginx and HAProxy, typically perform these operations faster than Node.js. Having a web server like Nginx read static content from disk is going to be faster than Node.js as well. Even clustering can sometimes be more efficient, as a reverse proxy like Nginx will use less memory and CPU than an additional Node.js process.

But, don’t take our word for it. Let’s run some benchmarks!

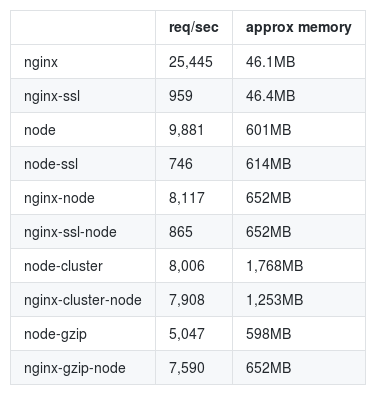

The following load testing was performed using siege. We ran the command with a concurrency value of 10 (10 simultaneous users making requests), and the command ran until 20,000 iterations were made (for 200,000 overall requests).

To check the memory we ran the command pmap <pid> | grep total a few times throughout the lifetime of the benchmark and then averaged the results. When running Nginx with a single worker process there end up being two processes running, one being the master and the other being the worker. We then sum the two values. When running a Node.js cluster of 2 there will be 3 processes, one being the master and the other two being workers. The approx memory column in the following table is the total sum across every Nginx and Node.js process for the given test.

Here are the results of the benchmark:

In the node-cluster benchmark we use 2 workers. This means there are 3 Node.js processes running: 1 master and 2 workers. In the nginx-cluster-node benchmark we have 2 Node.js processes running. Each Nginx test has a single Nginx master and a single Nginx worker process. Benchmarks involve reading a file from disk, and neither Nginx nor Node.js were configured to cache the file in memory.

Using Nginx to perform SSL termination for Node.js results in a throughput increase of ~16% (749rps to 865rps). Using Nginx to perform gzip compression results in a throughput increase of ~50% (5,047rps to 7,590rps). Using Nginx to manage a cluster of processes resulted in a performance penalty of ~1% (8,006rps to 7,908rps), probably due to the overhead of passing an additional request over the loopback network device.
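For anyone who wants to check the arithmetic, the percentages follow from the requests-per-second figures quoted above. The helper name percentChange is ours.

```javascript
// Percentage change in requests per second relative to the baseline.
function percentChange(baselineRps, newRps) {
  return ((newRps - baselineRps) / baselineRps) * 100;
}

console.log(percentChange(749, 865).toFixed(1));   // 15.5 (SSL termination)
console.log(percentChange(5047, 7590).toFixed(1)); // 50.4 (gzip compression)
console.log(percentChange(8006, 7908).toFixed(1)); // -1.2 (clustering)
```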

Essentially the memory usage of a single Node.js process is ~600MB, while the memory usage of an Nginx process is ~50MB. These can fluctuate a little depending on what features are being used. For example, Node.js uses an additional ~13MB when performing SSL termination, and Nginx uses an additional ~4MB when used as a reverse proxy versus serving static content from the filesystem. An interesting thing to note is that Nginx uses a consistent amount of memory throughout its lifetime. However, Node.js constantly fluctuates due to the garbage-collecting nature of JavaScript.

Here are the versions of the software used while performing this benchmark:

- Nginx: 1.14.2
- Node.js: 10.15.3
- Siege: 3.0.8

The tests were performed on a machine with 16GB of memory, an i7-7500U CPU 4x2.70GHz, and Linux kernel 4.19.10. All the necessary files required to recreate the above benchmarks are available here: IntrinsicLabs/nodejs-reverse-proxy-benchmarks.

Simplified Application Code

Benchmarks are nice and all, but in my opinion the biggest benefit of offloading work from a Node.js application to a reverse proxy is that of code simplicity. We get to reduce the number of lines of potentially-buggy imperative application code and exchange it for declarative configuration. A common sentiment amongst developers is that they’re more confident in code written by an external team of engineers, such as the Nginx team, than in code written by themselves.

Instead of installing and managing gzip compression middleware and keeping it up-to-date across various Node.js projects we can instead configure it in a single location. Instead of shipping or downloading SSL certificates and either re-acquiring them or restarting application processes we can instead use existing certificate management tools. Instead of adding conditionals to our application to check if a process is a master or worker we can offload this to another tool.

A reverse proxy allows our application to focus on business logic and forget about protocols and process management.
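As a rough picture of what is left once those concerns move out, the application can shrink to something like the sketch below. The names handleRequest and createApp and the JSON payload are hypothetical. The sketch assumes the reverse proxy runs on the same machine and handles HTTPS, gzip, and dispatching.

```javascript
const http = require('http');

// Hypothetical handler: business logic only, with no TLS, gzip, or cluster code.
function handleRequest(req, res) {
  res.writeHead(200, { 'Content-Type': 'application/json' });
  res.end(JSON.stringify({ message: 'hello' }));
}

// Plain HTTP server; the reverse proxy in front of it deals with the rest.
function createApp() {
  return http.createServer(handleRequest);
}
```

Starting it with createApp().listen(3000, '127.0.0.1') (port chosen arbitrarily) binds to the loopback interface, so requests from other machines have to go through the proxy.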

This article was written by me, Thomas Hunter II. I work at a company called Intrinsic (btw, we’re hiring!) where we specialize in writing software for securing Node.js applications. We currently have a product which follows the Least Privilege model for securing applications. Our product proactively protects Node.js applications from attackers, and is surprisingly easy to implement. If you are looking for a way to secure your Node.js applications, give us a shout at [email protected].
